Data teams still spend far too much time on preparation rather than generating insights. According to Forrester, analysts devote nearly 70% of their time to preparing external data rather than analyzing it. That slows down insights and drains resources.

But wrangling and ingesting data doesn’t have to take up a major part of your workflow. The modern data stack solves this problem. Built as a modular, cloud-first, and scalable alternative to monolithic legacy platforms, it lets teams focus on insights instead of manual preparation.

That’s where Windsor.ai comes in. With Windsor’s ELT/ETL connectors, extracting and loading data into cloud warehouses takes just minutes. Windsor streams analysis-ready data from 325+ sources directly into any destination, making data integration tasks faster and easier than ever.

Read on to learn why the modern data stack matters, which components it consists of, and how Windsor.ai helps data teams streamline workflows up to 10x.

What is the modern data stack?

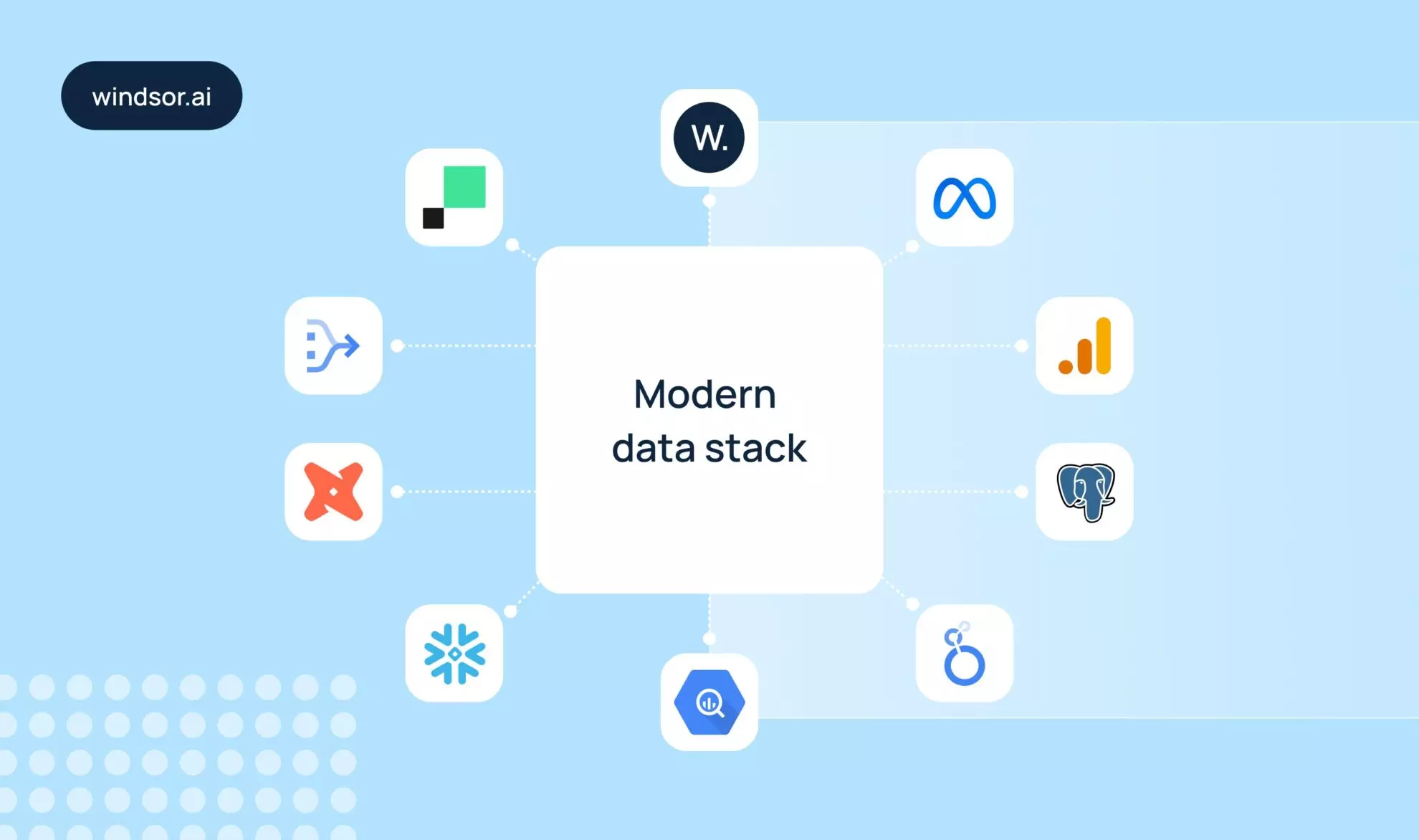

The modern data stack is a set of advanced cloud-native tools that are used together to aggregate, transform, store, and analyze data. This approach does not rely on a single rigid pipeline, as older systems did. Instead, it uses specialized services that interconnect via APIs and cloud infrastructure, making data pipelines more flexible, scalable, and easier to build.

It also lets teams replace tedious manual processes with fully automated solutions, so you no longer have to spend hours building and managing infrastructure.

A typical stack starts with a tool like Windsor to sync multiple data sources into a powerful data warehouse such as BigQuery, Snowflake, Databricks, or Redshift, where the data can be transformed and prepared for analysis. The stack can then be extended with BI tools such as Looker Studio, Tableau, or Power BI.

Each tool serves as a layer and performs a distinct function, while the system as a whole keeps pipelines running reliably. This is why the modern data stack has replaced legacy solutions like traditional ETL (extract, transform, load) pipelines.

Why the modern data stack replaced legacy ETL

In the past, the standard approach was legacy ETL pipelines. They extracted, transformed, and loaded data into on-premise warehouses. However, these systems were costly, difficult to manage, and overly rigid, and their custom scripts locked teams into hard-coded workflows.

The move to ELT (extract, load, transform), the approach behind the modern data stack, addressed these issues. New tools are API-first and cloud-native, and you can configure and use them without programming skills. ELT pipelines are also modular, so you can select the most appropriate tool for every layer.
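To make the ETL/ELT difference concrete, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in for a cloud warehouse. The table names, columns, and sample rows are hypothetical. In the ETL path, Python transforms the rows before loading; in the ELT path, raw rows are loaded first and the transformation runs as SQL inside the warehouse.

```python
import sqlite3

# Hypothetical raw ad-platform rows: (date, campaign, cost in micros)
RAW_ROWS = [
    ("2024-05-01", "brand", 1_500_000),
    ("2024-05-01", "search", 2_250_000),
    ("2024-05-02", "brand", 500_000),
]


def etl(conn: sqlite3.Connection) -> None:
    """ETL: transform in application code, then load only the result."""
    totals: dict[str, float] = {}
    for _date, campaign, cost_micros in RAW_ROWS:
        totals[campaign] = totals.get(campaign, 0.0) + cost_micros / 1_000_000
    conn.execute("CREATE TABLE etl_spend (campaign TEXT, spend REAL)")
    conn.executemany("INSERT INTO etl_spend VALUES (?, ?)", totals.items())


def elt(conn: sqlite3.Connection) -> None:
    """ELT: load raw rows as-is, then transform with SQL inside the warehouse."""
    conn.execute("CREATE TABLE raw_ads (date TEXT, campaign TEXT, cost_micros INTEGER)")
    conn.executemany("INSERT INTO raw_ads VALUES (?, ?, ?)", RAW_ROWS)
    # The raw table stays available, so models can be rebuilt or changed later.
    conn.execute(
        """
        CREATE TABLE elt_spend AS
        SELECT campaign, SUM(cost_micros) / 1000000.0 AS spend
        FROM raw_ads
        GROUP BY campaign
        """
    )


conn = sqlite3.connect(":memory:")
etl(conn)
elt(conn)
print(conn.execute("SELECT * FROM etl_spend ORDER BY campaign").fetchall())
print(conn.execute("SELECT * FROM elt_spend ORDER BY campaign").fetchall())
```

Both paths produce the same totals, but the ELT version also keeps `raw_ads`, so new models can be added later without re-extracting from the source.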

Ultimately, the modern data stack decouples ingestion, transformation, and analytics, bringing more flexibility. Now, you don’t need to tear down a whole pipeline to replace one layer. Migrating from Redshift to Snowflake, as an example, doesn’t require you to rewrite all your dashboards. This time-saving modularity minimizes technical debt.
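Here is a minimal Python sketch of why decoupling helps: the dashboard code depends only on a small interface, so the warehouse behind it can change. The `Warehouse` protocol and the two stub classes are hypothetical; a real migration still involves SQL dialect differences and moving the data itself.

```python
from typing import Protocol


class Warehouse(Protocol):
    """The only contract the dashboard layer depends on (hypothetical interface)."""

    def query(self, sql: str) -> list[tuple]: ...


class RedshiftStub:
    """Hypothetical stand-in; a real adapter would call a Redshift driver."""

    def query(self, sql: str) -> list[tuple]:
        return [("redshift", sql)]


class SnowflakeStub:
    """Hypothetical stand-in; a real adapter would call a Snowflake driver."""

    def query(self, sql: str) -> list[tuple]:
        return [("snowflake", sql)]


def spend_dashboard(wh: Warehouse) -> list[tuple]:
    # Dashboard logic knows nothing about which vendor sits behind `wh`.
    return wh.query("SELECT campaign, SUM(spend) FROM spend GROUP BY campaign")


# Migrating means swapping the adapter, not rewriting the dashboard.
print(spend_dashboard(RedshiftStub())[0][0])   # redshift
print(spend_dashboard(SnowflakeStub())[0][0])  # snowflake
```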

Key features of a modern data stack

A modern data stack embodies several essential features:

- Scalability: You can begin small and scale with the growing data needs.

- Flexibility: A modular design allows simple replacement or addition of tools.

- Cloud-first: Tools run on cloud infrastructure without a heavy on-prem setup.

- API-based: Services integrate with one another through APIs.

- Frequent syncs: Data stays fresh through regularly scheduled incremental loads.

These capabilities allow teams to adapt quickly as requirements shift. Whether you’re a startup building an analytics pipeline from scratch or a mid-size company upgrading existing systems, a modern data stack is a proven way to achieve these goals.

Key benefits of modern data platforms

The advantages of modern data platforms go beyond the technical capabilities mentioned above. They deliver direct business impact through benefits like:

- Faster insights: With fully automated solutions in place, analysts spend less time wrangling data and more time analyzing it.

- Lower costs: Cloud-based pricing models replace expensive on-premise hardware and its ongoing maintenance.

- Improved access: BI tools make data easily available and clear to non-technical users across the organization.

- Vendor flexibility: Modular architecture avoids lock-in, letting you swap or add tools as needs change.

- Ease of setup: Even non-technical users like marketers or business owners can connect new data sources and tools without heavy technical skills, thanks to no-code connectors.

By adopting a modern data stack, organizations become more agile and responsive. Decision-making accelerates, scaling becomes simpler, and data pipelines stay reliable and future-ready, which is a significant shift from the constraints of legacy systems.

Key components of the modern data stack

The modern data stack has a layered architecture, where each part (tool) serves a distinct purpose. This modular design enables startups, mid-size companies, and enterprises to scale their data operations without heavy engineering resources or long implementation timelines.

Let’s break down each component of the modern data stack along with practical tool examples to show how the pieces fit together.

| Layer | What it does | Examples |
| --- | --- | --- |
| Data sources | Generates raw data from CRMs, ad platforms, web apps, files, and databases. | Salesforce, Google Ads, HubSpot, MySQL |
| Data integration (ELT) | Extracts and loads data from sources into your warehouse with zero engineering effort through no-code connectors. | Windsor.ai, Fivetran, Airbyte, Stitch |
| Data warehouse | Centralizes and stores all extracted data for further processing and analysis at scale. | BigQuery, Snowflake, Amazon Redshift, Databricks |
| Data transformation | Cleans, models, and prepares data into analysis-ready formats with version control. | dbt, Dataform |
| BI / analytics | Enables teams to explore, visualize, and share insights from the data. | Tableau, Looker, Power BI, Metabase |
| Reverse ETL | Pushes processed data back into operational tools (like CRMs or ad platforms) for activation. | Hightouch, Census, Grouparoo |

In this architecture, every layer plays a critical role. Raw data originates from various sources, integration tools extract it and load it into a central destination, warehouses store and organize it, transformation tools clean and model it, BI and analytics tools generate insights, and reverse ETL pushes those insights back into operational apps.
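The flow through the layers can be sketched in a few lines of plain Python, with one function per layer and a dictionary standing in for the warehouse. Every function name, field, and the 1,000 threshold for the "high" tier are hypothetical; the point is only the order of responsibilities.

```python
# Each function stands in for one layer of the stack; all names are hypothetical.

def source_crm() -> list[dict]:
    """Data source layer: raw records as a CRM API might return them."""
    return [
        {"email": "a@example.com", "deal_value": "1200"},
        {"email": "b@example.com", "deal_value": "300"},
    ]


def integrate(records: list[dict], warehouse: dict) -> None:
    """Integration layer: load raw records unchanged into the warehouse."""
    warehouse.setdefault("raw_crm", []).extend(records)


def transform(warehouse: dict) -> None:
    """Transformation layer: cast types and derive an analysis-ready model."""
    models = []
    for r in warehouse["raw_crm"]:
        value = float(r["deal_value"])  # in this sample the raw values arrive as strings
        models.append({"email": r["email"], "deal_value": value,
                       "tier": "high" if value >= 1000 else "standard"})
    warehouse["customers"] = models


def bi_report(warehouse: dict) -> dict:
    """BI layer: aggregate the modeled table into a metric."""
    return {"total_pipeline": sum(c["deal_value"] for c in warehouse["customers"])}


def reverse_etl(warehouse: dict) -> list[dict]:
    """Reverse ETL layer: build updates to push back into the CRM."""
    return [{"email": c["email"], "tier": c["tier"]} for c in warehouse["customers"]]


warehouse: dict = {}
integrate(source_crm(), warehouse)
transform(warehouse)
print(bi_report(warehouse))    # {'total_pipeline': 1500.0}
print(reverse_etl(warehouse))
```

Because each layer only reads what the previous one wrote, any single function can be replaced without touching the others, which mirrors the tool-per-layer idea.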

This multi-layered design lets you quickly adapt pipelines as business requirements evolve. Instead of investing in a single monolithic tool, as legacy systems required, you can choose the most suitable tool for each layer of the modern data stack.

The result is far greater flexibility. If your pipeline becomes too costly to maintain, you can swap providers. If a BI tool no longer meets your needs, you can test another without disrupting the whole stack. This modular approach reduces risk, speeds up insight generation, and supports continuous optimization.

Where Windsor.ai fits in the modern data stack

Windsor.ai is a leading data integration platform designed to simplify and accelerate the building of ELT and ETL pipelines. It brings affordability and speed to the modern data stack by syncing data from more than 325 sources into major cloud data warehouses and offering native integrations with BI tools. All of this happens through one no-code platform, so teams can focus on analysis instead of managing APIs and infrastructure.

Introducing Windsor.ai as the integration layer

A modern data stack relies on a robust integration layer to keep pipelines reliable, fresh, and fast. This tool should also remove the burden of manual data collection and uploads by automating the most time-consuming parts of the workflow. That’s exactly where Windsor.ai steps in.

Windsor.ai syncs data from 325+ sources, including marketing and sales platforms, accounting apps, CRMs, and analytics tools such as Google Ads, Meta Ads, Salesforce, and HubSpot.

Through secure OAuth connections, Windsor pulls clean, structured data directly into your cloud warehouse (BigQuery, Snowflake, Redshift, or Databricks) without a single line of code. Our no-code connectors handle automated schema mapping and normalization, eliminating engineering bottlenecks and accelerating insights. No more CSV exports or broken APIs.
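To illustrate what schema mapping and normalization mean in practice, here is a minimal sketch that maps rows from two ad platforms into one unified schema. The source field names are modeled on the Google Ads API (`segments.date`, `campaign.name`, `metrics.clicks`, `metrics.cost_micros`) and Meta's Ads Insights fields (`date_start`, `campaign_name`, `clicks`, `spend`); the mapping logic and sample values are illustrative assumptions, not Windsor.ai's implementation.

```python
# Hypothetical per-source mappings from source field names to one unified schema.
FIELD_MAPS = {
    "google_ads": {"segments.date": "date", "campaign.name": "campaign",
                   "metrics.clicks": "clicks", "metrics.cost_micros": "spend"},
    "meta_ads": {"date_start": "date", "campaign_name": "campaign",
                 "clicks": "clicks", "spend": "spend"},
}


def normalize(source: str, row: dict) -> dict:
    """Rename fields, coerce types, and tag each row with its source."""
    out = {"source": source}
    for src_field, target in FIELD_MAPS[source].items():
        out[target] = row[src_field]
    out["clicks"] = int(out["clicks"])
    if source == "google_ads":
        out["spend"] = int(out["spend"]) / 1_000_000  # micros -> currency units
    else:
        out["spend"] = float(out["spend"])            # string -> number
    return out


google_row = {"segments.date": "2024-05-01", "campaign.name": "brand",
              "metrics.clicks": 40, "metrics.cost_micros": 12_500_000}
meta_row = {"date_start": "2024-05-01", "campaign_name": "retargeting",
            "clicks": "25", "spend": "8.40"}

unified = [normalize("google_ads", google_row), normalize("meta_ads", meta_row)]
for r in unified:
    print(r)
```

Once both platforms share the same columns and units, blended reports become a simple query over one table.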

Windsor also provides native integrations with BI tools. You can build live dashboards in Looker Studio, Tableau, or Power BI on top of your integrated data.

Use cases range from multi-channel campaign monitoring and CRM-ad data blending to automated executive reporting. By uniting fragmented information into a single source of truth, Windsor.ai becomes a fundamental part of the modern data stack.

Key features of Windsor.ai

Windsor.ai makes data integration simple, flexible, and cost-effective thanks to these features:

- 100% no-code setup: Connect multiple data sources and load them into any destination in just minutes.

- Pipeline health monitoring: Automatic schema drift detection, authentication refresh, and retry mechanisms keep your pipelines stable and reliable.

- Incremental syncs & scheduling: Update datasets as frequently as needed without overloading your warehouse, maintaining high performance and lower costs.

- Clustering & partitioning support: Optimize queries and storage usage, so you’re not paying for unused capacity.

- No premium connectors: Every data source and destination is available on every plan, starting at just $19/month after a 30-day free trial, while the majority of top ELT tools charge $500+.
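As a rough illustration of what schema drift detection involves, the sketch below compares an incoming row with an expected column-to-type schema and reports added, removed, and retyped columns. The BigQuery-style type labels and sample data are assumptions for illustration; this is not Windsor.ai's implementation.

```python
# Illustrative BigQuery-style type labels for Python values (assumption).
TYPE_NAMES = {str: "STRING", int: "INTEGER", float: "FLOAT", bool: "BOOLEAN"}


def detect_schema_drift(expected: dict[str, str], row: dict) -> dict[str, list[str]]:
    """Compare one incoming row with the expected column -> type schema."""
    added = sorted(set(row) - set(expected))      # new columns from the source
    removed = sorted(set(expected) - set(row))    # columns the source dropped
    type_changed = sorted(
        col
        for col in set(expected) & set(row)
        # Nulls carry no type information, so they are not flagged.
        if row[col] is not None and TYPE_NAMES.get(type(row[col])) != expected[col]
    )
    return {"added": added, "removed": removed, "type_changed": type_changed}


expected = {"date": "STRING", "campaign": "STRING", "clicks": "INTEGER", "spend": "FLOAT"}
incoming = {"date": "2024-05-01", "campaign": "brand", "clicks": "40",
            "spend": 12.5, "conversions": 3}
print(detect_schema_drift(expected, incoming))
# {'added': ['conversions'], 'removed': [], 'type_changed': ['clicks']}
```

What happens next (adding the new column, casting the value, or pausing the sync and alerting someone) is a policy choice that each integration tool makes in its own way.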

Examples of modern data stack with Windsor.ai

Here are some practical ways teams use Windsor.ai as their data integration layer:

1. CRM → Windsor.ai → BigQuery → dbt → Looker Studio

Windsor extracts customer and deal data from your CRM and loads it into BigQuery, where dbt handles transformation. The cleaned datasets are then visualized through Looker Studio dashboards.

2. Marketing stack: Google Ads + Meta Ads + LinkedIn Organic → Windsor.ai → Snowflake → Power BI

Windsor.ai blends advertising and organic data from multiple platforms and loads it into Snowflake for secure, scalable storage. From there, data flows into Power BI for downstream analytics and visualizations.

3. Facebook Ads → Windsor.ai → BigQuery → dbt

You can easily automate Facebook Ads campaign reporting by using our dbt + BigQuery package.

These examples, and thousands more, are possible thanks to Windsor.ai’s 325+ supported sources and 20+ destinations, making it the central hub of your modern data stack.

Best practices for building a modern data stack

Follow these five simple yet effective principles to build a powerful, future-proof data stack:

Use cloud-native and modular components

Start with cloud-native solutions. They minimize overhead and scale more easily than legacy systems. Equally important is modularity: a modern data stack should let you swap out parts without disrupting workflows. For example, you should be able to use BigQuery today and move to Snowflake tomorrow with minimal friction.

Avoid hard-coding ETL pipelines

Hard-coded ETL pipelines are rigid and costly to maintain. Instead, build pipelines with modern integration platforms like Windsor.ai, which offer ready-to-use connectors that automatically handle authentication, schema drift, and retries. This reduces engineering overhead and keeps pipelines stable.
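Retries are a good example of plumbing that is easy to get wrong in hand-written scripts. Below is a minimal sketch of one common pattern, exponential backoff with jitter. `TransientError` and `flaky_fetch` are hypothetical names, and the delays are illustrative defaults rather than any tool's actual settings.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, rate limits)."""


def with_retries(fn, max_attempts: int = 5, base_delay: float = 1.0, sleep=time.sleep):
    """Call fn(), retrying transient failures with exponential backoff plus jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # give up and surface the error to monitoring
            delay = base_delay * 2 ** (attempt - 1)       # 1s, 2s, 4s, ...
            sleep(delay + random.uniform(0, delay / 2))   # jitter spreads out retries


calls = {"n": 0}


def flaky_fetch():
    """Hypothetical API call that is rate limited twice, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("rate limited")
    return ["row1", "row2"]


print(with_retries(flaky_fetch, sleep=lambda s: None))  # ['row1', 'row2']
```

Passing `sleep` as a parameter keeps the function testable without real waiting.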

Use incremental updates to control costs

Refreshing entire datasets every time in a cloud data warehouse is expensive and time-consuming. Incremental loading updates only new or changed records, reducing storage and compute costs while accelerating queries.
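A common way to implement incremental loading is a high-water mark: remember the newest `updated_at` already loaded and upsert only rows that are newer. The sketch below uses `sqlite3` as a stand-in warehouse and assumes the source exposes an `updated_at` timestamp in a consistent ISO-8601 format; the `orders` table and its rows are hypothetical.

```python
import sqlite3


def incremental_load(conn: sqlite3.Connection, source_rows: list[tuple]) -> int:
    """Upsert only rows newer than the warehouse's high-water mark; return how many."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
    )
    # High-water mark: the newest updated_at already loaded ('' on the first run).
    (watermark,) = conn.execute(
        "SELECT COALESCE(MAX(updated_at), '') FROM orders"
    ).fetchone()
    # ISO-8601 timestamps in the same format compare correctly as strings.
    changed = [row for row in source_rows if row[2] > watermark]
    conn.executemany(
        "INSERT INTO orders (id, amount, updated_at) VALUES (?, ?, ?) "
        "ON CONFLICT(id) DO UPDATE SET amount = excluded.amount, "
        "updated_at = excluded.updated_at",
        changed,
    )
    conn.commit()
    return len(changed)


conn = sqlite3.connect(":memory:")
day1 = [(1, 10.0, "2024-05-01T09:00"), (2, 25.0, "2024-05-01T10:00")]
print(incremental_load(conn, day1))  # 2: the first run loads everything
# Next sync: order 2 was updated and order 3 is new; order 1 is unchanged.
day2 = [(1, 10.0, "2024-05-01T09:00"), (2, 30.0, "2024-05-02T08:00"),
        (3, 5.0, "2024-05-02T09:00")]
print(incremental_load(conn, day2))  # 2: only the changed rows
print(conn.execute("SELECT * FROM orders ORDER BY id").fetchall())
```

A strict `>` comparison can miss late-arriving rows that share the watermark's timestamp; production tools typically add a lookback window or use change logs to cover that case.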

Document data models with dbt

Poorly documented data models create confusion. dbt lets you manage transformations, document models, and track lineage under version control. Adding dbt to your stack increases trust in your data and preserves institutional knowledge as teams grow or change.
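In dbt, checks like `not_null` and `unique` are declared next to a model in a YAML properties file and compiled to SQL. The Python sketch below only mirrors the idea behind those two checks on hypothetical in-memory rows; it is not how dbt runs them.

```python
def check_not_null(rows: list[dict], column: str) -> list[dict]:
    """Rows where the column is missing or null (the idea behind a not_null test)."""
    return [row for row in rows if row.get(column) is None]


def check_unique(rows: list[dict], column: str) -> list:
    """Non-null values that appear more than once (the idea behind a unique test)."""
    seen, duplicates = set(), set()
    for row in rows:
        value = row.get(column)
        if value is None:
            continue  # nulls are left to the not-null check
        if value in seen:
            duplicates.add(value)
        seen.add(value)
    return sorted(duplicates)


# Hypothetical modeled table with one null email and one duplicated id.
customers = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 2, "email": "c@example.com"},
]
print({"not_null(email)": len(check_not_null(customers, "email")),
       "unique(id)": check_unique(customers, "id")})
# {'not_null(email)': 1, 'unique(id)': [2]}
```

Any non-empty result is a failed test; running such checks on every build is what lets downstream users trust the numbers.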

Use BI tools that support semantic layers

Analytics is the final layer. Choose BI tools with robust semantic layers, such as Looker Studio, Tableau, or Power BI, to ensure stable, consistent reporting. A semantic layer standardizes definitions across departments so everyone works from the same trusted numbers.
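The core idea of a semantic layer is that each metric is defined once and every report reuses that definition. Real semantic layers (LookML in Looker, for example) are far richer; the sketch below only shows the principle, with hypothetical metric names and a hypothetical `marketing_daily` table.

```python
# Hypothetical shared metric definitions: one place, reused by every dashboard.
METRICS = {
    "spend": "SUM(spend)",
    "clicks": "SUM(clicks)",
    "cpc": "SUM(spend) / NULLIF(SUM(clicks), 0)",  # avoids division by zero
}


def build_query(metrics: list[str], dimensions: list[str],
                table: str = "marketing_daily") -> str:
    """Compile requested metrics and dimensions into SQL using the shared definitions."""
    unknown = [m for m in metrics if m not in METRICS]
    if unknown:
        raise KeyError(f"Undefined metrics: {unknown}")
    select = dimensions + [f"{METRICS[m]} AS {m}" for m in metrics]
    sql = f"SELECT {', '.join(select)} FROM {table}"
    if dimensions:
        sql += f" GROUP BY {', '.join(dimensions)}"
    return sql


print(build_query(["spend", "cpc"], ["campaign"]))
```

Because marketing and finance both call `build_query` with the same `cpc` definition, they cannot end up with two different formulas for the same number.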

How to define your best-fit data stack (checklist)

Use this quick checklist to select the most suitable tools for your data stack based on your current infrastructure, channels, and business needs:

1. Identify data sources

List all existing sources from which you need to pull data (CRM, marketing platforms, analytics tools, databases, etc.).

2. Choose a data integration tool

- Ensure the tool can connect to all your identified sources.

- Confirm it supports no-code setup, automatic schema mapping, incremental loading, and secure authentication (OAuth).

3. Select a cloud data warehouse

- Evaluate the platform’s scalability, performance, and cost.

- Verify its compatibility with your integration platform and BI tools.

4. Define the data transformation layer

Use tools that support version-controlled transformations, documented data models and lineage, and automated data quality tests.

5. Choose BI & analytics tools

- Ensure semantic layer support for consistent metrics across departments.

- Confirm seamless connectivity with your warehouse.

6. Plan for reverse ETL (optional)

- Identify operational apps that require enriched data from your warehouse.

- Select a reverse ETL tool compatible with these apps.

7. Validate scalability and efficiency

- Confirm the stack can scale without heavy engineering overhead.

- Ensure it supports rapid insights and reliable pipelines.
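Steps 1 to 3 of the checklist can be turned into a quick gap report once you have written down your requirements. The sketch below is a hypothetical example: the required sources, feature labels, candidate tool, and warehouse choice are placeholders to replace with your own findings.

```python
# Hypothetical requirements and candidate capabilities; replace with your own findings.
REQUIRED_SOURCES = {"Salesforce", "Google Ads", "Meta Ads", "MySQL"}
REQUIRED_FEATURES = {"no_code", "schema_mapping", "incremental", "oauth"}
WAREHOUSE = "BigQuery"

candidate = {
    "name": "integration tool A",
    "sources": {"Salesforce", "Google Ads", "Meta Ads"},
    "features": {"no_code", "schema_mapping", "incremental", "oauth"},
    "destinations": {"BigQuery", "Snowflake"},
}


def evaluate(tool: dict) -> list[str]:
    """Return unmet checklist items for a candidate integration tool."""
    gaps = [f"missing source: {s}" for s in sorted(REQUIRED_SOURCES - tool["sources"])]
    gaps += [f"missing feature: {f}" for f in sorted(REQUIRED_FEATURES - tool["features"])]
    if WAREHOUSE not in tool["destinations"]:
        gaps.append(f"cannot load into {WAREHOUSE}")
    return gaps


print(evaluate(candidate))  # ['missing source: MySQL']
```

An empty list means the candidate clears the integration and warehouse steps; the remaining steps (transformation, BI, reverse ETL) can be checked the same way.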

Conclusion

The modern data stack delivers the speed, flexibility, and scalability that legacy systems simply can’t match. It shifts the focus from manual setup to actionable insights, enabling teams to make smarter decisions faster. Windsor.ai sits at the center of this transformation as an all-in-one data integration platform.

With the ability to connect to 325+ sources, push data into leading cloud data warehouses, and integrate natively with top BI tools, Windsor.ai streamlines your entire analytics workflow.

Ready to centralize all your data in minutes? Start your free 30-day Windsor.ai trial today and experience the full power of a modern data stack!

FAQs

What is the modern data stack?

A modern data stack is a modular, cloud-first architecture composed of specialized tools for each layer: data ingestion, transformation, storage, and analytics, all designed to replace legacy ETL systems.

What tools are used in the modern data stack?

Some of the most popular tools in the modern data stack for every layer are:

- Data integration: Windsor.ai, Fivetran, Airbyte, Stitch, Supermetrics

- Data warehouse: Snowflake, BigQuery, Databricks, Redshift

- Transformation: dbt, Dataform

- BI & analytics: Tableau, Looker, Power BI

- Reverse ETL: Hightouch, Integrate.io, Census

What’s the role of Windsor.ai in the modern data stack?

Windsor.ai is a data integration platform that connects to 325+ sources, delivering clean data to warehouses and syncing it to downstream analytics tools in minutes. It plays a fundamental role in the modern data stack, ensuring the accuracy, completeness, and freshness of the provided data.

Do I need coding skills to use Windsor.ai?

No! Windsor.ai is completely no-code. You can connect any supported data source to any supported destination and set up pipelines in minutes with no programming.

Can I connect Windsor.ai to dbt and BigQuery?

Yes. Windsor.ai connects seamlessly to BigQuery, and you can use dbt to transform your data as needed. We also offer pre-built dbt+BigQuery packages for popular data sources.

Is Windsor.ai an ETL or ELT tool?

Windsor.ai does both. Our platform provides basic in-app ETL features, letting you create custom metrics, apply filters, and transform your data before sending it to your warehouse. You can also perform ELT directly in your cloud environment for faster and more advanced processing.
