Google Analytics 4 is great for quick insights, but teams often hit limitations. The platform isn’t built for deep analysis at scale, historical access is limited (event-level data retention tops out at 14 months on standard properties), and it’s hard to combine GA4 with data from ads, CRM, sales, or product systems in one place.

That’s why many marketing and data teams export GA4 data to Google BigQuery. BigQuery is a scalable warehouse for raw GA4 events that can handle large volumes, power advanced SQL analysis, and support machine learning workflows without requiring a heavy infrastructure setup.

The key question is: what’s the best way to load GA4 into BigQuery in a clean format and keep it syncing over time? Some options are manual and require ongoing upkeep, while others are automated and scale as data grows.

In this guide, you’ll learn four proven ways to sync GA4 to BigQuery:

Method 1. No-code connector to automate extraction, loading, and updates

Method 2. Manual CSV export from GA4 with upload to BigQuery

Method 3. BigQuery Data Transfer Service to automate data ingestion and daily updates

Method 4. Custom scripts/GA4 data API pipelines for full control

We’ll break down each method, its pros and cons, and which one fits your reporting needs.

Things to know before moving GA4 data to BigQuery

The following are some important technical aspects you should know before starting to load GA4 data into BigQuery:

- GA4 API limitations

- Data schema and custom columns

These things directly affect the accuracy of your data, the reliability of your queries, and long-term maintenance in BigQuery.

GA4 API limitations

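Most custom pipelines depend on the GA4 Data API, which comes with these limitations:

- Query model learning curve: The Data API doesn’t use SQL or GAQL (GAQL is the Google Ads query language). Reports are requested through methods such as runReport, using JSON lists of dimensions, metrics, date ranges, and filters. You need to know the API’s dimension and metric names, and which combinations are compatible, before you can work with it comfortably.

- Rate limits: The API is quota-based (tokens per property per hour and per day, plus limits on concurrent requests). Once a quota is exhausted, requests fail with errors rather than simply slowing down, so your pipeline must handle retries with proper backoff.

- Data latency: GA4 processes data with a delay (Google notes it can take 24–48 hours), and recent figures can still change. This can throw off dashboards that require near-real-time updates.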
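The retry-and-backoff requirement can be sketched in Python using only the standard library. `RetryableError` and `call_with_backoff` are hypothetical names. In a real pipeline, you would raise `RetryableError` when the API answers with a quota or transient error, such as HTTP 429 or 503. Capped exponential backoff with full jitter is one common strategy, not something GA4 mandates.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RetryableError(Exception):
    """Hypothetical marker for quota or transient failures (e.g. HTTP 429 or 503)."""


def call_with_backoff(
    request: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `request`, retrying RetryableError with capped exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return request()
        except RetryableError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let the caller see the last error
            # The delay ceiling doubles each attempt (1s, 2s, 4s, ...) up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: wait a random time below the ceiling so workers don't retry in lockstep.
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")  # the loop always returns or raises


# Usage: a fake request that hits the quota twice before succeeding.
calls = {"count": 0}


def flaky_report_request() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise RetryableError("quota exhausted")
    return "report ok"


waits = []
result = call_with_backoff(flaky_report_request, sleep=waits.append)
```

Injecting `sleep` keeps the function testable without real waiting. In production, you would keep the default `time.sleep`.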

Data schema and custom columns

GA4 uses an event-based, nested structure, which adds complexity when you bring the data into BigQuery:

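- Nested data: GA4’s nested fields show up as RECORDs (STRUCTs) and repeated fields (ARRAYs). You’ll need to UNNEST them in your queries or flatten them before loading into flat reporting tables.

- Custom parameter logic: Custom event parameters and user properties arrive as key-value pairs rather than ready-made columns. You need a stable mapping from each key to a column name and type before loading the data.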

- Joins across report types: When joining events, users, and conversions, you need a cleaner, normalized schema to avoid duplicates and keep the data aligned.
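Here is a minimal sketch of the key-to-column mapping step. It assumes rows shaped like GA4’s native BigQuery export, where `event_params` is a repeated record. Each entry has a `key` and a `value` struct with `string_value`, `int_value`, `float_value`, and `double_value`. The `param_` prefix and the `keep` allow-list are our own conventions, not part of GA4.

```python
from typing import Any, Dict, Iterable, Optional

# Typed value slots used by GA4's native BigQuery export for each event parameter.
VALUE_FIELDS = ("string_value", "int_value", "float_value", "double_value")


def flatten_event_params(
    event: Dict[str, Any], keep: Optional[Iterable[str]] = None
) -> Dict[str, Any]:
    """Turn the repeated event_params key/value records into flat `param_<key>` columns."""
    wanted = set(keep) if keep is not None else None
    row = {k: v for k, v in event.items() if k != "event_params"}
    for param in event.get("event_params") or []:
        key = param["key"]
        if wanted is not None and key not in wanted:
            continue  # skip parameters that aren't in your agreed column mapping
        value = param.get("value") or {}
        # Normally only one typed slot is populated; take the first non-null one.
        row[f"param_{key}"] = next(
            (value[f] for f in VALUE_FIELDS if value.get(f) is not None), None
        )
    return row


# Usage with a simplified event in the shape of the native export.
event = {
    "event_name": "page_view",
    "event_timestamp": 1700000000123456,
    "event_params": [
        {"key": "page_location", "value": {"string_value": "https://example.com/pricing"}},
        {"key": "ga_session_id", "value": {"int_value": 1700000000}},
        {"key": "debug_mode", "value": {"int_value": 1}},
    ],
}
flat = flatten_event_params(event, keep=["page_location", "ga_session_id"])
```

Keeping the allow-list under version control gives you the stable mapping: a new parameter only becomes a column when you add it on purpose.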

Supported GA4 data types & formats for BigQuery

Before you export GA4 data into BigQuery, make sure your output format and schema match what BigQuery can reliably ingest:

Supported file formats: ORC, Avro, Parquet, CSV, and NDJSON. For heavy workloads, Parquet or Avro usually performs best.
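For NDJSON, each line must hold one complete JSON object. The Python sketch below serializes flat report rows in that format. The column names and values are made up for illustration. Dates are written as ISO 8601 strings, which BigQuery parses into DATE columns.

```python
import json
from datetime import date, datetime
from typing import Any, Dict, Iterable


def _json_default(value: Any) -> str:
    # json can't serialize dates natively; ISO 8601 strings load into DATE/TIMESTAMP columns.
    if isinstance(value, (date, datetime)):
        return value.isoformat()
    raise TypeError(f"Unsupported type: {type(value).__name__}")


def to_ndjson(rows: Iterable[Dict[str, Any]]) -> str:
    """Serialize rows as newline-delimited JSON: one complete JSON object per line."""
    return "".join(
        json.dumps(row, default=_json_default, separators=(",", ":")) + "\n" for row in rows
    )


rows = [
    {"date": date(2024, 1, 1), "sessionSource": "google", "sessions": 120},
    {"date": date(2024, 1, 2), "sessionSource": "(direct)", "sessions": 45},
]
payload = to_ndjson(rows)
```

Saved to a file, the payload could be loaded with `bq load --source_format=NEWLINE_DELIMITED_JSON`, followed by the destination table, the file path, and a schema.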

Compatible data types: GA4 fields must map to BigQuery-supported types:

- STRING: campaign or page names

- INTEGER: counts like events or users

- FLOAT: metrics like session duration

- BOOLEAN: flags or status fields

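- STRUCT (RECORD): nested objects, often stored in repeated (ARRAY) fields such as event parameters

- TIMESTAMP: event timestamps (GA4’s native export stores event_timestamp as INT64 microseconds, which you can convert with TIMESTAMP_MICROS())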
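To make the type mapping concrete, here is a hypothetical schema for a flat GA4 table. It is written in the JSON schema-file format that `bq load` and the console’s “Edit as text” option accept, plus a timestamp helper. The column names are illustrative only. The helper assumes the source value is INT64 microseconds since the Unix epoch, which is how GA4’s native export stores `event_timestamp`.

```python
import json
from datetime import datetime, timezone

# Hypothetical flat GA4 table; every name here is illustrative, not a GA4 or Windsor default.
GA4_SCHEMA = [
    {"name": "event_date", "type": "DATE", "mode": "REQUIRED"},
    {"name": "event_timestamp", "type": "TIMESTAMP", "mode": "REQUIRED"},
    {"name": "event_name", "type": "STRING", "mode": "REQUIRED"},
    {"name": "campaign_name", "type": "STRING", "mode": "NULLABLE"},
    {"name": "event_count", "type": "INTEGER", "mode": "NULLABLE"},
    {"name": "engagement_time_sec", "type": "FLOAT", "mode": "NULLABLE"},
    {"name": "is_conversion", "type": "BOOLEAN", "mode": "NULLABLE"},
    {
        "name": "device",  # nested object -> RECORD (STRUCT)
        "type": "RECORD",
        "mode": "NULLABLE",
        "fields": [
            {"name": "category", "type": "STRING", "mode": "NULLABLE"},
            {"name": "browser", "type": "STRING", "mode": "NULLABLE"},
        ],
    },
]


def micros_to_timestamp(micros: int) -> str:
    """Convert an INT64 microsecond epoch value to a UTC string BigQuery accepts as TIMESTAMP."""
    seconds, remainder = divmod(micros, 1_000_000)
    moment = datetime.fromtimestamp(seconds, tz=timezone.utc).replace(microsecond=remainder)
    return moment.isoformat()


# Save this as ga4_schema.json and pass it as the schema argument of `bq load`.
schema_json = json.dumps(GA4_SCHEMA, indent=2)
```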

GA4 data extraction: tools, APIs & setup

You can extract Google Analytics 4 data in several ways, from simple exports to fully programmatic pipelines.

The most common tech stack for GA4 data extraction includes:

- Extraction tools and SDKs: Official Google Analytics Data API client libraries for Python, Java, Node.js, PHP, and other languages simplify authentication and requests.

- GA4 Data API: Programmatic access through methods such as runReport lets you request exactly the dimensions and metrics you need.

- Authentication & security: Requests are authorized with OAuth 2.0 user credentials or with a service account that has been granted access to the GA4 property. Pipelines must refresh access tokens automatically and store credentials securely so the data keeps moving without interruption.
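The sketch below builds a runReport request with Python’s standard library to show what a Data API call looks like. The endpoint path and body fields (`dateRanges`, `dimensions`, `metrics`, `limit`, `offset`) follow the public v1beta REST reference for `properties.runReport`. The property ID and access token are placeholders, and obtaining the OAuth token is out of scope. The request is built but never sent.

```python
import json
import urllib.request
from typing import List

API_ROOT = "https://analyticsdata.googleapis.com/v1beta"


def build_run_report_request(
    property_id: str,
    access_token: str,
    start_date: str,
    end_date: str,
    dimensions: List[str],
    metrics: List[str],
    limit: int = 10_000,
    offset: int = 0,
) -> urllib.request.Request:
    """Build (but don't send) a runReport call for one GA4 property."""
    body = {
        "dateRanges": [{"startDate": start_date, "endDate": end_date}],
        "dimensions": [{"name": name} for name in dimensions],
        "metrics": [{"name": name} for name in metrics],
        "limit": limit,    # rows per page
        "offset": offset,  # index of the first row in this page, for pagination
    }
    return urllib.request.Request(
        url=f"{API_ROOT}/properties/{property_id}:runReport",
        data=json.dumps(body).encode("utf-8"),
        headers={
            # Short-lived OAuth 2.0 access token with the analytics.readonly scope.
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_run_report_request(
    property_id="123456789",      # placeholder GA4 property ID
    access_token="ACCESS_TOKEN",  # placeholder; obtain and refresh it via OAuth
    start_date="2024-01-01",
    end_date="2024-01-31",
    dimensions=["date", "sessionSource"],
    metrics=["sessions", "activeUsers"],
)
# Sending it would be urllib.request.urlopen(request), wrapped in retry logic for quota errors.
```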

Limitations of BigQuery Data Transfer Service (DTS)

To import GA4 data into BigQuery automatically, you can use Google’s native BigQuery Data Transfer Service. It handles the integration smoothly but comes with some significant limitations you should be aware of:

Daily refresh only

The most critical limitation is the fixed refresh schedule. DTS only runs syncs once per day, so your GA4 data shows up a day late. Because no intraday updates are supported, you can’t use DTS for real-time reporting or quick decision-making.

Limited customization

DTS offers little flexibility in selecting fields or filtering data before export. Google automatically defines table and column names. As a result, you may import unnecessary data, increasing storage costs and cluttering your dataset.

No transformations

DTS also doesn’t support data transformations. All data cleaning, conversion, and normalization can be handled only in BigQuery after loading. This increases the workload and query cost, making the entire process heavier.

No schema control

In DTS, the GA4 data schema is fixed, so you can’t change it to fit your needs. Anything custom (new metrics, repeated fields, or nested data) has to be cleaned up afterward, which makes analysis longer and more complicated.

Cost and scaling issues

DTS works smoothly with small exports. But frequent updates or large datasets increase the storage and query costs quickly.

⚙️ Windsor.ai is a reliable alternative to DTS that helps avoid these limitations. With a no-code ETL/ELT setup, you can automate GA4 ingestion into BigQuery, schedule near-real-time or custom refreshes, and keep pipelines stable with automatic data mapping and built-in monitoring.

Top methods to integrate GA4 data into BigQuery

Method 1: No-code automated GA4 to BigQuery connector (Windsor.ai)

- Prerequisites: BigQuery project, Windsor.ai account, GA4 property with the required permissions

- Integration tools used: No-code ELT/ETL platform (Windsor.ai)

- Type of solution: Fully managed, automated, no-code data connector.

- Best suited for: Non-technical marketing and business teams, dev-first data engineering teams, and digital agencies that need a fast setup, consistent GA4 reporting, and scalable pipelines.

- Limitations: Full features are available only with a paid Windsor plan. You can still use Windsor for GA4 to BigQuery syncs on the Forever Free plan.

Handling GA4 to BigQuery sync manually takes time, requires custom scripts, and often breaks when APIs or schemas change. Windsor.ai automates the full integration, so you can get GA4 data into BigQuery in under 3 minutes without ongoing engineering maintenance.

Your GA4 data lands in BigQuery in a clean, structured format that’s ready for reporting, dashboards, and deeper analysis.

Key processes automated by Windsor.ai:

- Data extraction from one or multiple GA4 properties and accounts

- Normalization and standardization of metrics and dimensions

- Incremental loads (new data only) or scheduled batch updates

- Scheduled refreshes (every 15 minutes, hourly, daily, or custom)

- Historical backfills for past GA4 events

- Monitoring, error handling, and automatic retries

- BigQuery table optimization (partitioning and clustering)

- Multi-property and multi-account setups

- Data blending with 325+ sources, including Google Ads, Meta Ads, Shopify, HubSpot, TikTok, etc.

- Data integration into AI tools (ChatGPT, Claude, Gemini, etc.) via MCP for downstream analysis

How it works:

Windsor enables GA4 to BigQuery integration in three simple steps:

- Connect your GA4 account(s) to Windsor.ai.

- Configure your dataset by choosing the relevant metrics and dimensions (select from 2,000+ fields) and date range. Optionally blend data from other sources.

- Select BigQuery as your destination and create a destination task (confirm the query, set a refresh schedule, and apply backfilling, partitioning, or clustering if needed).

Once activated, Windsor starts delivering your GA4 data to BigQuery and keeps it updated on your schedule. You can then analyze it directly in BigQuery or connect it to BI tools and AI chats for dashboards and deeper insights.

🚀 Try your first GA4 to BigQuery integration through Windsor.ai with a 30-day free trial.

Method 2: Manual CSV export & upload

- Prerequisites: Google Cloud BigQuery project, GA4 property.

- Integration tools used: CSV file.

- Type of solution: Fully manual workflow.

- Best suited for: One-time uploads with small datasets.

- Limitations: Not scalable, error-prone, and requires repeating the process whenever you need fresh data.

You can manually export GA4 data as CSV and upload it to BigQuery. It’s free and straightforward for one-time analyses or small datasets.

But for ongoing reporting, especially with large volumes or frequent refresh needs, this method doesn’t fit. Manual exports take time, lead to inconsistencies, and make it hard to keep dashboards reliably accurate and up-to-date.

How it works:

Step 1: Export your GA4 report:

- Sign in to GA4 and open the report you need.

- Set your date range, pick your metrics and dimensions, then choose Share this report > Download File > Download CSV.

Step 2: Open your BigQuery project:

- In the Google Cloud Console, open BigQuery and choose the project and dataset where the data should be inserted.

Step 3: Upload the CSV to BigQuery:

- Click Create table to create a new table.

- Choose Upload, and select your CSV file.

- Use auto-detect or define the schema yourself, then click Create to load the data.
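GA4 CSV downloads usually start with `#`-prefixed metadata lines (report name, date range) above the header row. Those lines can confuse schema auto-detect. The Python sketch below strips them and rewrites header names into conservative BigQuery column names before you upload. The sample export is invented. If your download contains more than one table, this sketch would merge them, so split the file first.

```python
import csv
import io
import re


def clean_ga4_csv(raw: str) -> str:
    """Strip GA4's '#' metadata lines and make header names safe for BigQuery."""
    # Keep only real CSV lines: drop '#' comments and blank separator lines.
    # Caveat: this line-based filter assumes no quoted field contains a newline.
    lines = [ln for ln in raw.splitlines() if ln.strip() and not ln.startswith("#")]
    reader = csv.reader(lines)
    header = next(reader, None)
    if header is None:
        raise ValueError("no CSV rows found in export")
    safe_header = []
    for name in header:
        # Conservative naming: lowercase letters, digits, underscores; no leading digit.
        column = re.sub(r"[^0-9A-Za-z_]+", "_", name).strip("_").lower() or "column"
        if column[0].isdigit():
            column = "_" + column
        safe_header.append(column)
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(safe_header)
    writer.writerows(reader)  # data rows pass through unchanged
    return out.getvalue()


raw_export = (
    "# ----------------------------------------\n"
    "# Start date: 20240101\n"
    "# End date: 20240131\n"
    "\n"
    "Session source,Sessions,Engagement rate\n"
    "google,120,0.61\n"
    "(direct),45,0.52\n"
)
cleaned = clean_ga4_csv(raw_export)
```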

Keep in mind these limitations associated with the manual upload method:

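- There are no scheduled or incremental updates.

- You have to repeat this process every time you need fresh data.

- Large CSV files are cumbersome: console uploads from your computer are capped at 100 MB, so bigger files must go through Cloud Storage.

- Errors during export or upload can leave gaps or duplicate rows in your table.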

⚡ For teams that need reliable, repeatable updates, Windsor.ai automates GA4 to BigQuery syncs and eliminates the manual overhead.

Method 3: BigQuery Data Transfer Service (DTS)

- Prerequisites: Google Cloud BigQuery project, GA4 property.

- Integration tools: BigQuery Data Transfer Service.

- Solution type: Automated GA4 BigQuery pipeline with minimal customization.

- Best for: Teams that want a simple, Google-built setup without extra tools and are ok with daily updates and default fields.

- Limitations: Only refreshes once a day, fixed schema, no custom field selection, no transformations before loading.

BigQuery Data Transfer Service is a native Google tool to send GA4 data to BigQuery. It uses Google’s built-in schemas for exports, freeing you from manual scripts.

How it works:

- Open BigQuery in the Google Cloud Console and select the Data Transfer option.

- Click Create a transfer.

- Choose GA4 from the list and connect it.

- Fill in the transfer details, such as the refresh window, service account, and name.

- Save the job, and DTS will handle the GA4 data upload.

Keep in mind:

DTS is simple to set up, but it comes with trade-offs. The schema is locked, so you can’t pick the fields you want or rename anything.

It refreshes only once a day, making real-time reporting impossible. It can’t clean your data, combine it with other sources, or manage several GA4 properties smoothly.

💡 If you need more flexibility and customization as well as more frequent updates, Windsor.ai is your best fit.

Method 4: Custom GA4 Scripts/GA4 API → BigQuery

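- Prerequisites: Google Cloud BigQuery project, access to the GA4 property, the Google Analytics Data API enabled in your Cloud project, and OAuth credentials or a service account.

- Integration tools used: Google Cloud Functions or Cloud Run, the BigQuery API, the GA4 Data API, and custom scripts or client libraries.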

- Type of solution: Custom code-based setup.

- Best for: Dev-led teams that need full control over data, custom fields, or very specific reporting requirements.

- Limitations: Requires strong coding skills and continuous monitoring and maintenance. API or schema changes can break the pipeline. Managing multiple GA4 properties adds extra complexity.

This approach gives you full control over your dataset when connecting Google Analytics 4 to BigQuery. You write your own scripts or API code to pull GA4 data through the Data API (for example, with runReport requests) and push it into BigQuery through REST or client libraries.

You’re building the whole ETL yourself: extraction, transformations, loading, scheduling, error handling, and monitoring. Even a minor GA4 API or schema change can break the pipeline and require urgent fixes.

Additionally, you have to manage OAuth, rate limits, pagination, incremental loads, retries, and logs. Multi-property setups are harder to manage, and scaling the pipeline requires careful planning.

How it works:

- Use lightweight scripts (for example, Google Apps Script) for small-scale automation.

- Use the GA4 Data API directly, through REST or a client library, for complex, multi-property pipelines.

- Authenticate via OAuth or a service account.

- Send runReport (or batchRunReports) requests to pull the dimensions and metrics you need.

- Organize the data so it loads smoothly into BigQuery.

- Push the data to BigQuery using the streaming insertAll API, load jobs, or BigQuery’s client libraries.

- Schedule automated runs via Cloud Functions, Cloud Run, or cron jobs.
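For the push step, the streaming `tabledata.insertAll` REST method takes a body with a `rows` list. Each row carries its data under `json` and an optional `insertId`. The sketch below batches rows (Google recommends at most 500 rows per request). It derives a deterministic `insertId` from each row’s content so a retried batch can be de-duplicated; this is our own design choice, not a BigQuery requirement. BigQuery’s insertId de-duplication is best effort and short-lived. For re-loading whole days, a load job that overwrites the affected partition is usually safer. Project, dataset, and table names are placeholders.

```python
import hashlib
import json
from typing import Any, Dict, Iterator, List

MAX_ROWS_PER_REQUEST = 500  # Google's recommended batch ceiling for insertAll


def insert_all_url(project: str, dataset: str, table: str) -> str:
    return (
        "https://bigquery.googleapis.com/bigquery/v2"
        f"/projects/{project}/datasets/{dataset}/tables/{table}/insertAll"
    )


def row_insert_id(row: Dict[str, Any]) -> str:
    # Same content -> same insertId, so a retried batch can be de-duplicated (best effort only).
    canonical = json.dumps(row, sort_keys=True, separators=(",", ":"), default=str)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def insert_all_bodies(
    rows: List[Dict[str, Any]], batch_size: int = MAX_ROWS_PER_REQUEST
) -> Iterator[Dict[str, Any]]:
    """Split rows into tabledata.insertAll request bodies."""
    for start in range(0, len(rows), batch_size):
        batch = rows[start:start + batch_size]
        yield {
            "skipInvalidRows": False,      # fail the whole request if any row is invalid
            "ignoreUnknownValues": False,  # treat fields missing from the table schema as errors
            "rows": [{"insertId": row_insert_id(r), "json": r} for r in batch],
        }


rows = [{"date": "2024-01-01", "sessionSource": f"source_{i}", "sessions": i} for i in range(1200)]
bodies = list(insert_all_bodies(rows))
url = insert_all_url("my-project", "ga4_reporting", "daily_sessions")
```

Two genuinely identical rows would share an insertId. Make sure each row includes a dimension combination that makes it unique.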

This method is powerful, but it takes extensive engineering resources and ongoing monitoring.

🎯 For teams seeking fully automated, highly customizable GA4-to-BigQuery pipelines with no code, Windsor.ai removes all technical complexities with scalable, ready-to-use connectors.

Step-by-step: How to connect GA4 to BigQuery via Windsor.ai

If you’re wondering whether you can really connect Google Analytics 4 to BigQuery with Windsor.ai in under 3 minutes, this guide will walk you through the exact setup step by step.

📝 Windsor.ai’s BigQuery Integration Documentation: https://windsor.ai/documentation/how-to-integrate-data-into-google-bigquery/.

Prerequisites:

- Active GA4 property

- BigQuery enabled on a Google Cloud Platform (GCP) account

- Windsor.ai account

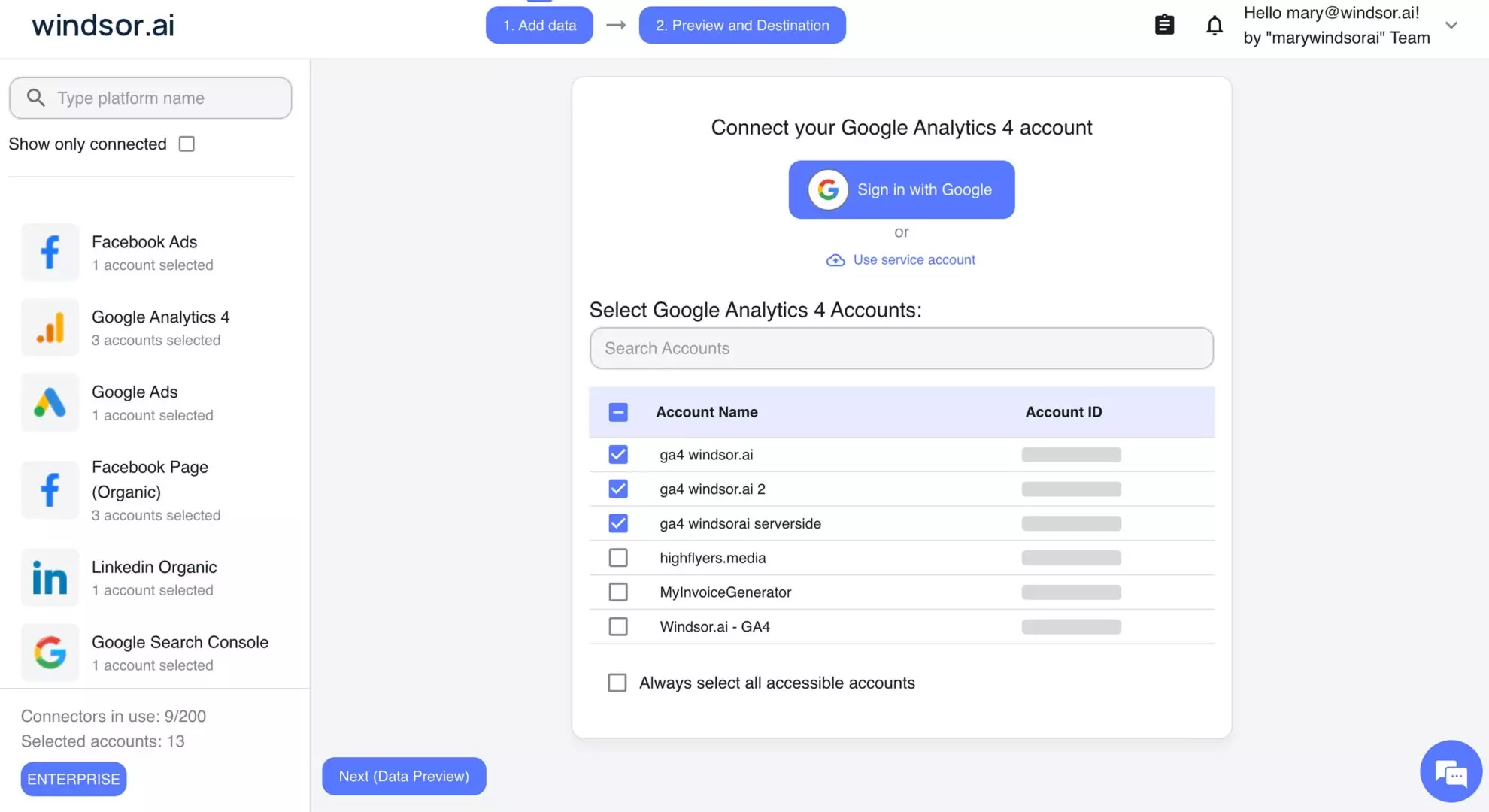

Step 1: Select GA4 as a data source

Choose Google Analytics 4 as your data source and authorize your account. Windsor.ai will connect all available GA4 properties.

Step 2: Configure your dataset

Customize the data that you want to pull by setting a date range and choosing from over 2,000 metrics and dimensions, including sessions, events, conversions, and user-level attributes. You can also blend GA4 data with other sources for cross-channel analysis.

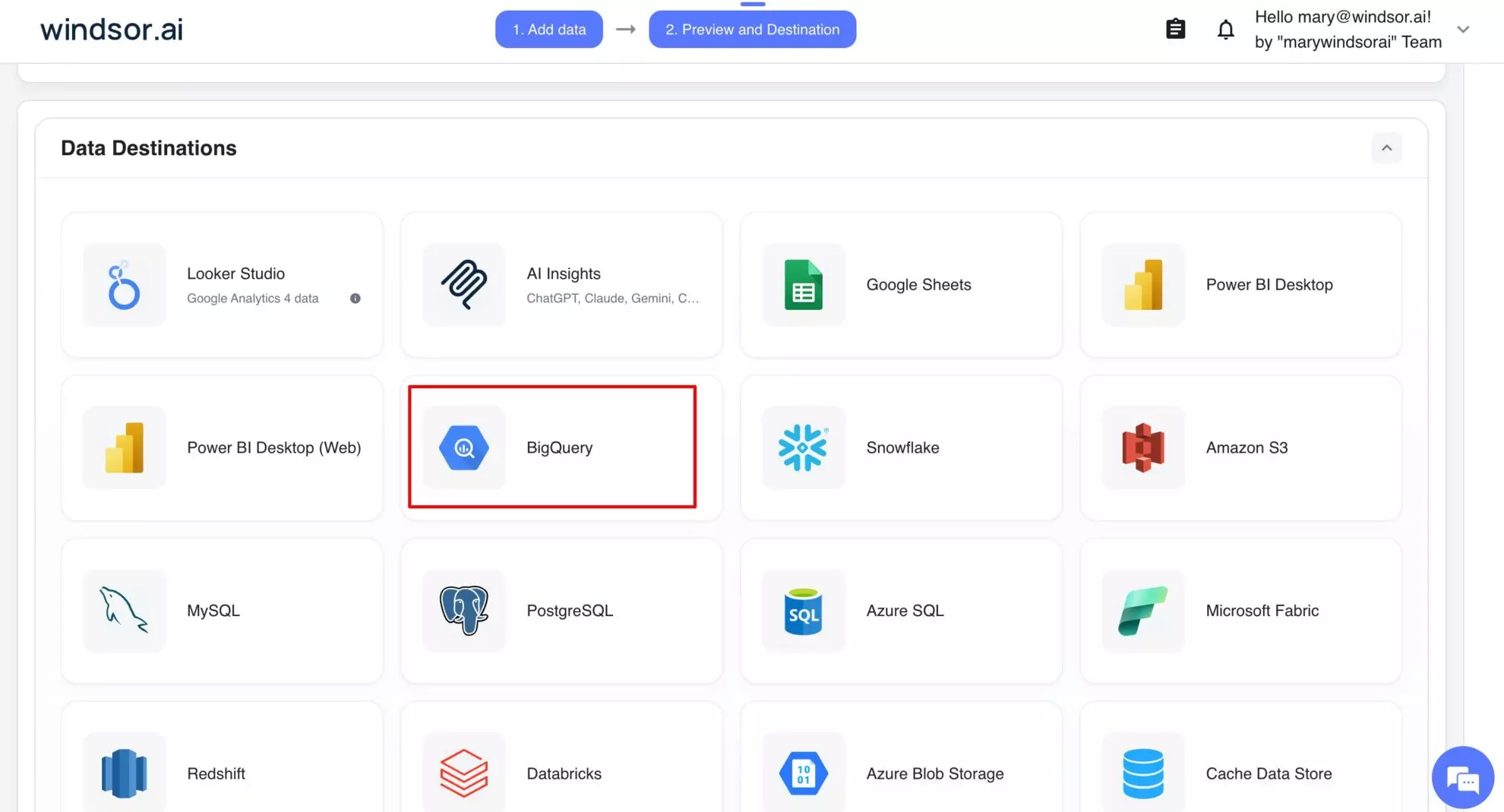

Step 3: Select BigQuery as a destination

Scroll down to the Data Destinations section and choose BigQuery.

Click Add Destination Task and enter the following details:

- Task name: Enter any name you wish.

- Authentication type: You can authorize via a Google Account (OAuth 2.0) or a Service Account file.

- Project ID: This can be found in your Google Cloud Console.

- Dataset ID: This can be found in your BigQuery project.

- Table name: Windsor.ai will create this table for you if it doesn’t exist.

- Backfill: You can backfill historical data when setting up the task (available only on the paid plans).

- Schedule: Define how often data should be updated in BigQuery (e.g., hourly, daily; available on Standard plans and above).

Note: While Windsor.ai auto-creates the table schema on first setup, it does not automatically update the schema when new fields are added or edited later.

Select advanced options (optional)

Windsor.ai supports clustering and partitioning for BigQuery tables to help you improve query performance and reduce costs by optimizing how data is stored and retrieved:

- Partitioning: Segments data by date only, using either a date column or ingestion time (e.g., a separate partition for each month of data).

- Clustering: Segments data by column values (e.g., by source, account, campaign).

You can combine table clustering with table partitioning to achieve fine-grained sorting for further query optimization and cost reduction.
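Windsor.ai applies these options for you in the destination task. If you create or inspect such a table yourself, the equivalent settings in BigQuery’s `tables.insert` REST body are `timePartitioning` and `clustering`. The sketch below builds that JSON body only and doesn’t call the API. The project, dataset, table, and column names are hypothetical.

```python
from typing import Any, Dict, List, Optional

PARTITION_TYPES = {"HOUR", "DAY", "MONTH", "YEAR"}


def table_resource(
    project: str,
    dataset: str,
    table: str,
    fields: List[Dict[str, str]],
    partition_field: Optional[str] = None,
    partition_type: str = "DAY",
    cluster_by: Optional[List[str]] = None,
) -> Dict[str, Any]:
    """Build a tables.insert body with time partitioning and optional clustering."""
    if partition_type not in PARTITION_TYPES:
        raise ValueError(f"partition_type must be one of {sorted(PARTITION_TYPES)}")
    cluster_by = cluster_by or []
    if len(cluster_by) > 4:
        raise ValueError("BigQuery allows at most four clustering columns")
    partitioning: Dict[str, str] = {"type": partition_type}
    if partition_field:
        partitioning["field"] = partition_field  # column-based; omit for ingestion-time
    resource: Dict[str, Any] = {
        "tableReference": {"projectId": project, "datasetId": dataset, "tableId": table},
        "schema": {"fields": fields},
        "timePartitioning": partitioning,
    }
    if cluster_by:
        # Column order matters: data is sorted by the first clustering column first.
        resource["clustering"] = {"fields": cluster_by}
    return resource


resource = table_resource(
    "my-project",
    "ga4_reporting",
    "ga4_sessions",
    fields=[
        {"name": "date", "type": "DATE"},
        {"name": "source", "type": "STRING"},
        {"name": "campaign", "type": "STRING"},
        {"name": "sessions", "type": "INTEGER"},
    ],
    partition_field="date",
    partition_type="MONTH",  # one partition per month, as in the example above
    cluster_by=["source", "campaign"],
)
```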

When completed, click Test connection. If the connection is set properly, you’ll see a success message at the bottom; otherwise, an error message will appear with details. If successful, click Save to activate the destination task and begin GA4 data transfer to BigQuery.

Now you can monitor the task status in the selected data destination section. The green ‘upload’ button with the status ‘ok’ indicates that the task is active and running successfully.

Windsor.ai automatically handles dataset creation, table mapping, and schema normalization.

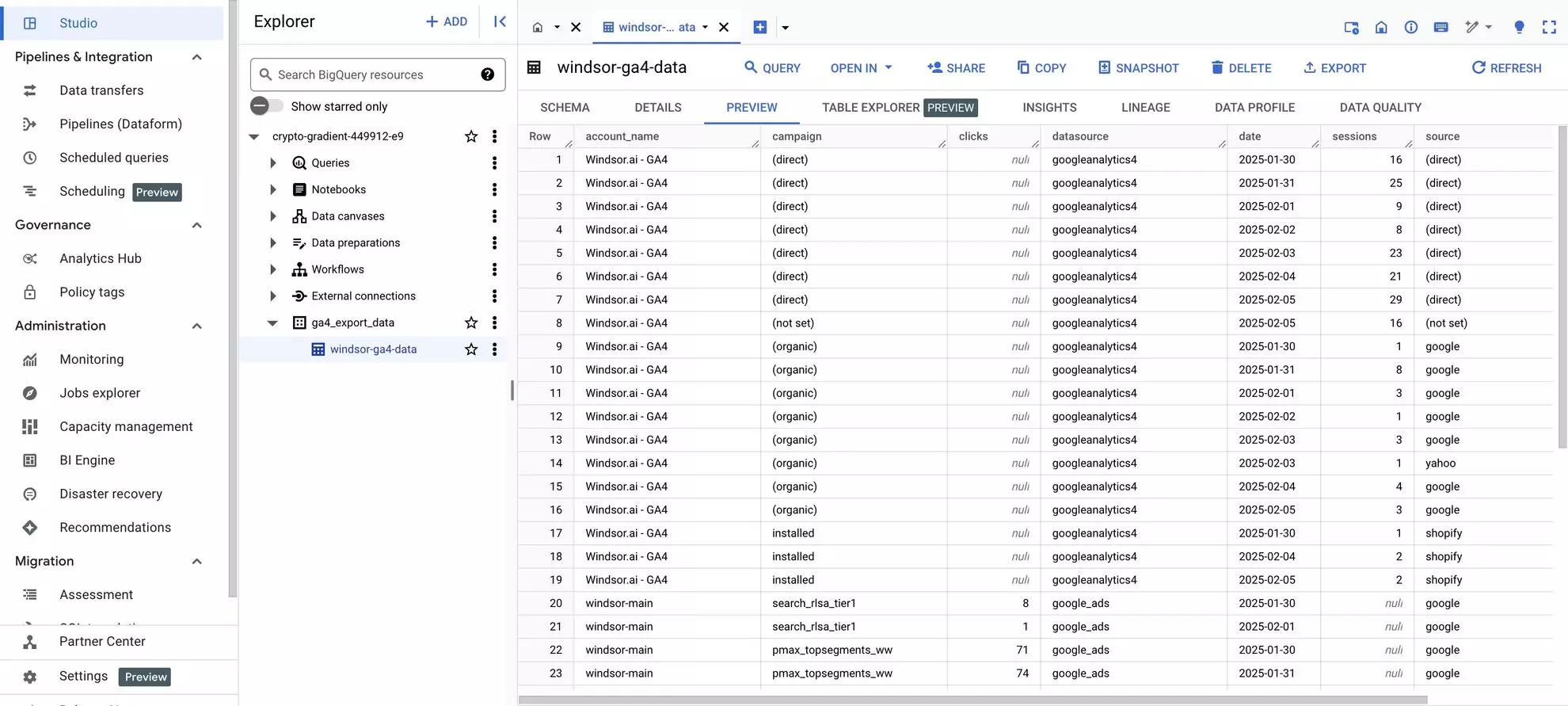

Step 4: Verify data in BigQuery

Open your BigQuery project and check the tables. You should see a new table created by Windsor.ai with your synced Google Analytics 4 data.

🚀 Windsor.ai makes the GA4 to BigQuery integration fast, reliable, and fully automated. It removes manual exports, enables near-real-time updates, and frees your team to focus on insights instead of pipeline maintenance.

Summary: Windsor.ai vs other integration methods

| Feature | Windsor.ai (no-code ELT/ETL) | Manual CSV export | BigQuery Data Transfer Service (DTS) | Custom scripts/GA4 Data API |
| --- | --- | --- | --- | --- |
| Technical skills | Minimal; no coding required | Basic spreadsheet skills | Basic BigQuery knowledge | High; coding in Python/JS against the GA4 Data API, plus Cloud Functions |
| Automation | Fully automated (incremental loading & scheduled syncs) | None; manual uploads only | Automated daily exports | Fully customizable; requires scripting and scheduling |
| Refresh frequency | 15-min, hourly, daily | Only when you upload a new CSV file | Daily only | Fully flexible; can implement near real-time |
| Scalability | High; multi-property and multi-account support | Low; large datasets are cumbersome | Moderate; limited by daily schedule | High, but requires robust engineering for multiple accounts |
| Customization | Full control over metrics, dimensions, and schema | None; fixed export | Limited; fixed schema and fields | Full control; custom schema, metrics, and transformations |
| Setup difficulty | Low; 2–3 minute setup | Low; simple CSV uploads | Medium; GUI setup, but no schema tweaks | High; build a full ELT pipeline from scratch |
| Ongoing maintenance | Minimal; system handles updates, errors, and retries | High; repeat manual exports | Low, but limited flexibility | High; monitor API changes, handle errors, retries, and rate limits |
| Cost | Free or paid subscription | Free | Free, included in Google Cloud | High; takes developer time and cloud resources |
| Best use cases | Automated GA4 pipelines in BigQuery, multi-account reporting, advanced analytics | One-time exports or small datasets | Simple GA4 exports for basic reporting | Complex, highly customized pipelines for engineering teams |

Why load GA4 data into BigQuery?

Many teams send GA4 data to BigQuery for deeper analysis and cleaner, unified reporting, thanks to these benefits:

- BigQuery acts as a scalable data warehouse, handling large datasets without lag.

- By sending GA4 events directly to BigQuery, you can get data in a structured, ready-to-query format.

- BigQuery isn’t bound by GA4’s data retention limits, so you can keep GA4 data for long-term analysis and historical reporting.

- With GA4 data in BigQuery, you can build dashboards in Looker Studio, Power BI, or Tableau.

- You can also join GA4 data with ads, CRM, or backend systems to get a full, cross-channel view.

- Engineering teams can use this integration for predictive analytics. Machine learning models can forecast conversions, churn, or lifetime value.

All in all, BigQuery helps you automate GA4 exports, analyze large datasets, create a single source of truth, and get comprehensive insights. It becomes the backbone of reliable, scalable GA4 reporting.

Conclusion

Syncing Google Analytics 4 to BigQuery unlocks deeper analysis, long-term data retention, and scalable reporting.

You can get GA4 data into BigQuery in several ways:

- Manual exports

- Native GA4 BigQuery export via Data Transfer Service

- Custom scripts

- No-code connector

Each approach works, but they vary widely in setup time and ongoing maintenance.

If you want a pipeline that stays reliable as data volume grows, an automated option is usually the easiest path. Tools like Windsor.ai help you connect GA4 to BigQuery, schedule refreshes, and reduce the overhead of manual exports and script maintenance, so your data stays consistently accurate and up to date.

🚀 Try Windsor.ai for free today: https://onboard.windsor.ai/ — and unlock the full potential of your Google Analytics 4 to BigQuery integration; no credit card required for the 30-day trial.

FAQs

What are the main ways to export GA4 data to BigQuery?

You can export GA4 data to BigQuery using: a no-code connector (Windsor.ai), manual CSV export, native GA4 → BigQuery export (Google-managed), or custom scripts via the GA4 Data API.

How can I automate GA4 exports to BigQuery?

For automation, use Windsor.ai (scheduled syncs + monitoring) or the BigQuery Data Transfer Service for Google-managed daily delivery. Custom scripts can also be automated, but require programming skills and maintenance.

Can I send GA4 data to BigQuery in near real time?

Yes; near real-time is possible via Windsor.ai scheduled syncs and custom scripts.

When should I use the native GA4 → BigQuery export via DTS?

Use it when you want a simple, Google-managed pipeline that loads GA4 data into BigQuery on a daily schedule, with minimal setup and no third-party tooling.

When does manual CSV export make sense?

Manual CSV export is best for one-time uploads, quick checks, or small datasets, but not suitable for ongoing reporting or frequent refreshes.

When are custom scripts the best option?

Custom scripts are best when you need full control: custom transformations, real-time scheduling, specific field logic, or highly tailored multi-property workflows, provided you can maintain the pipeline.

When should I use a no-code connector like Windsor.ai?

Use Windsor.ai when you want fast setup, frequent refreshes, less maintenance, and the option to blend GA4 with other sources before loading into BigQuery.

Which file formats does BigQuery support for imports?

BigQuery supports CSV, NDJSON, Avro, Parquet, and ORC.

Which GA4 data types are compatible with BigQuery?

GA4 fields commonly map to BigQuery types like STRING, INT64 (INTEGER), FLOAT64 (FLOAT), BOOL (BOOLEAN), STRUCT/RECORD, TIMESTAMP, DATE, and ARRAY.

How do I switch from manual export or scripts to Windsor.ai?

Connect GA4 in Windsor.ai, configure the fields/date range, choose BigQuery as the destination, and activate scheduled sync.
